Who needs 100% accuracy when 90% is more than enough?

More often than not, the practical, on-the-ground applications that become widely used depend on one simple algorithm that beats all the complicated ones developed by scientists to impress other scientists.

This is the story of someone who happens to be at the intersection of two important fields: engineering and data science.

Let me tell you something: if I wanted to develop a complicated neural network that does the desired task with an added accuracy or efficiency of 5%, I would drop the idea before I even started. Let me give you an example.

Designing a fire alarm system is one of the most tedious tasks in engineering design. I have heard many ideas on how to automate the process: taking the dimensions of each room and making sense of them, or using machine learning on data gathered from previous projects, and so on.

While these ideas sound sensible, they are not practical (for the time being).

|

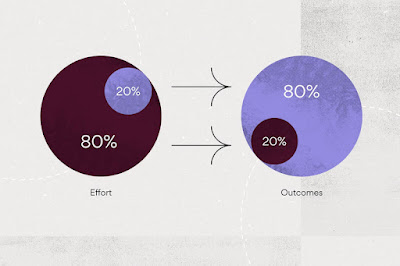

| Source: https://asana.com/resources/pareto-principle-80-20-rule |

One night, I was at the office with a tight schedule for an upcoming fast-track project that was due in one week. I decided to go home, although common sense back then was to spend more time on the task. I had a feeling that if I got enough sleep, I would find a trick that would help me finish ahead of time. The next morning it clicked: why not see whether the Pareto Principle applies to the system? Just like that, I exported the data of the current project and a previous project and made two graphs that changed the way I, and others, design the system forever. These graphs were:

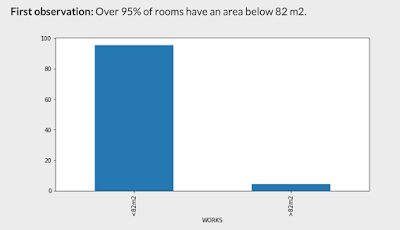

1) The percentage of rooms in the project that are 82 m² or smaller

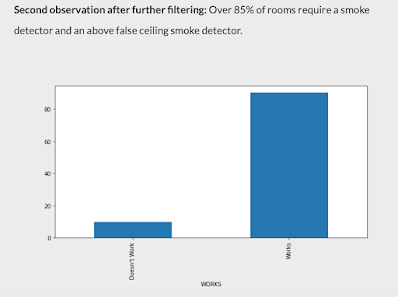

2) The percentage of smoke detectors among all the detector types used

The results were around 95% and 85%, respectively.
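Both percentages take only a few lines to compute from an exported room schedule. Here is a minimal sketch, assuming each room is exported as a record with hypothetical `area_m2` and `detector` fields. The sample rooms are made up for illustration and are not the project data.

```python
# Hypothetical export: one record per room. Field names and values are
# illustrative assumptions, not the actual project data.
rooms = [
    {"name": "Office 1", "area_m2": 18.0, "detector": "smoke"},
    {"name": "Office 2", "area_m2": 22.5, "detector": "smoke"},
    {"name": "Corridor", "area_m2": 40.0, "detector": "smoke"},
    {"name": "Kitchen", "area_m2": 30.0, "detector": "heat"},
    {"name": "Hall", "area_m2": 150.0, "detector": "smoke"},
]

AREA_LIMIT_M2 = 82.0  # threshold used in the first graph


def share(items, predicate):
    """Return the percentage of items for which predicate is true."""
    items = list(items)
    if not items:
        return 0.0
    return 100.0 * sum(1 for item in items if predicate(item)) / len(items)


small_room_pct = share(rooms, lambda r: r["area_m2"] <= AREA_LIMIT_M2)
smoke_pct = share(rooms, lambda r: r["detector"] == "smoke")

print(f"Rooms at or below {AREA_LIMIT_M2} m2: {small_room_pct:.0f}%")
print(f"Smoke detectors among all detectors: {smoke_pct:.0f}%")
```

If both numbers come out high on your own projects, a simple rule is likely to cover most of the work.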

A simple processing of data cracked the problem for me:

Why not write code that finds the rooms in the project that are less than 82 m² and places a smoke detector in each of them? Code that took no more than 15 minutes to develop saved about 90% of the time. The submission deadline was Wednesday; by Monday I was done, with no overtime, no weekend work, and no complicated code that would be buggy and generalize poorly to future projects.
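The rule itself could look something like the sketch below. It is not the original script. The record fields (`area_m2`, `detector`, `count`) and the function name are hypothetical, and the "at or below 82" comparison follows the wording of the first graph.

```python
AREA_LIMIT_M2 = 82.0  # threshold from the article; "82 and less" per the first graph


def assign_detectors(rooms, area_limit=AREA_LIMIT_M2):
    """Split rooms into an auto-assigned group and a manual-review group.

    Rooms at or below the area limit get one smoke detector; larger rooms
    are returned untouched so that an engineer can design them by hand.
    """
    auto, manual = [], []
    for room in rooms:
        if room["area_m2"] <= area_limit:
            # Copy the record so the input data is not modified in place.
            auto.append({**room, "detector": "smoke", "count": 1})
        else:
            manual.append(room)
    return auto, manual


# Hypothetical sample rooms for illustration only.
sample_rooms = [
    {"name": "A", "area_m2": 20.0},
    {"name": "B", "area_m2": 82.0},
    {"name": "C", "area_m2": 120.0},
]
auto_rooms, manual_rooms = assign_detectors(sample_rooms)
print([r["name"] for r in auto_rooms], [r["name"] for r in manual_rooms])
```

The rooms left in the manual group, plus any small rooms that need a different detector type, are the remaining share that still gets an engineer's attention.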

While this might be very simple and intuitive, some things are not. Let me give you another example. Last year, a client asked us to build a model that estimates the consumption of an air handling unit (AHU) in order to find potential savings, overconsumption, and faults in the system. The initial ideas for that model involved complex machine learning and deep learning algorithms with complicated features, plus endless mathematical equations. Elie Habboub and I, both studying data science and each with at least 5 years of engineering experience, proposed instead a simple nonlinear regression model with only 4 variables that can be found in any future AHU.

We trained the model and it performed well. How well? Let's say 80% of the time. But as soon as it was released for testing, its estimates started deviating from the actual performance. While others saw this as an error, we saw it as a signal. We were confident in our model, and it turned out that the system was in fact faulty and over-consuming. Why were we confident that the problem was in the AHU and not in our model? Because our model was simple and straightforward to reason about, unlike the more complex alternatives with their many engineering equations and deep learning algorithms.
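Treating a deviation as a signal can be as simple as watching how far the measured consumption drifts from the model's estimate. This is a rough sketch and not the model we built. The function name, the 10% tolerance, the run length of 3, and the readings are all assumptions chosen for illustration.

```python
def sustained_deviation(actual, predicted, tolerance=0.10, min_run=3):
    """Return the index where a sustained deviation starts, or None.

    A deviation is "sustained" when the relative error between actual and
    predicted consumption exceeds `tolerance` for `min_run` readings in a row.
    """
    run = 0
    for i, (a, p) in enumerate(zip(actual, predicted)):
        rel_err = abs(a - p) / abs(p) if p else float("inf")
        run = run + 1 if rel_err > tolerance else 0
        if run >= min_run:
            return i - min_run + 1  # first reading of the deviating run
    return None


# Made-up readings (kWh): the unit starts over-consuming at the third reading.
predicted_kwh = [10.0, 10.5, 11.0, 10.8, 10.2, 10.0]
actual_kwh = [10.2, 10.4, 12.5, 12.6, 12.0, 11.9]

start = sustained_deviation(actual_kwh, predicted_kwh)
print("Deviation starts at reading index:", start)
```

Requiring several consecutive readings keeps one noisy measurement from raising a false alarm.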

What was also cool was that when our model was used on another AHU, it was more accurate rather than less.

We as engineers know what data we have by simply looking at it. Instead of devising complicated statistical models to infer from our data, we can develop simple constraints that show when something is going wrong or right, without the need for unsupervised learning and other more complicated techniques.
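A simple constraint can be nothing more than an allowed range per measurement. In the sketch below, the field names and limits are hypothetical placeholders. In practice they would come from the engineer's knowledge of the equipment.

```python
# Hypothetical limits per measurement: (lowest allowed, highest allowed).
CONSTRAINTS = {
    "supply_air_temp_c": (10.0, 35.0),
    "fan_power_kw": (0.0, 15.0),
}


def violations(reading, constraints=CONSTRAINTS):
    """Return a list of human-readable problems found in one reading."""
    problems = []
    for key, (low, high) in constraints.items():
        value = reading.get(key)
        if value is None:
            problems.append(f"{key}: missing")
        elif not low <= value <= high:
            problems.append(f"{key}: {value} outside [{low}, {high}]")
    return problems


ok_reading = {"supply_air_temp_c": 18.0, "fan_power_kw": 7.5}
bad_reading = {"supply_air_temp_c": 50.0}

print(violations(ok_reading))
print(violations(bad_reading))
```

Any engineer can read the output and the rule that produced it, which is the point.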

But don't get me wrong: I love unsupervised learning. I have actually used it a lot, especially in applications where I need randomness in feature extraction to see the various options on each run. But that is a privilege for someone like me who understands unsupervised learning, unlike other engineers who don't know what it means and can't make sense of its results.

If you know your data and field, data science can make you unstoppable. But if you are the best data scientist out there, with no other field to couple your data science skills with, you'll be trying hard to impress other data scientists with no substantial value in the market or the real world.

Solving one riddle each week from the probability book "Cut the Knot" inspired me to think this way.

Buy yourself a copy and solve at least one riddle on weekends. You'll see that less is more and that simplicity is beautiful.

Peace,

Edmond